CachePilot

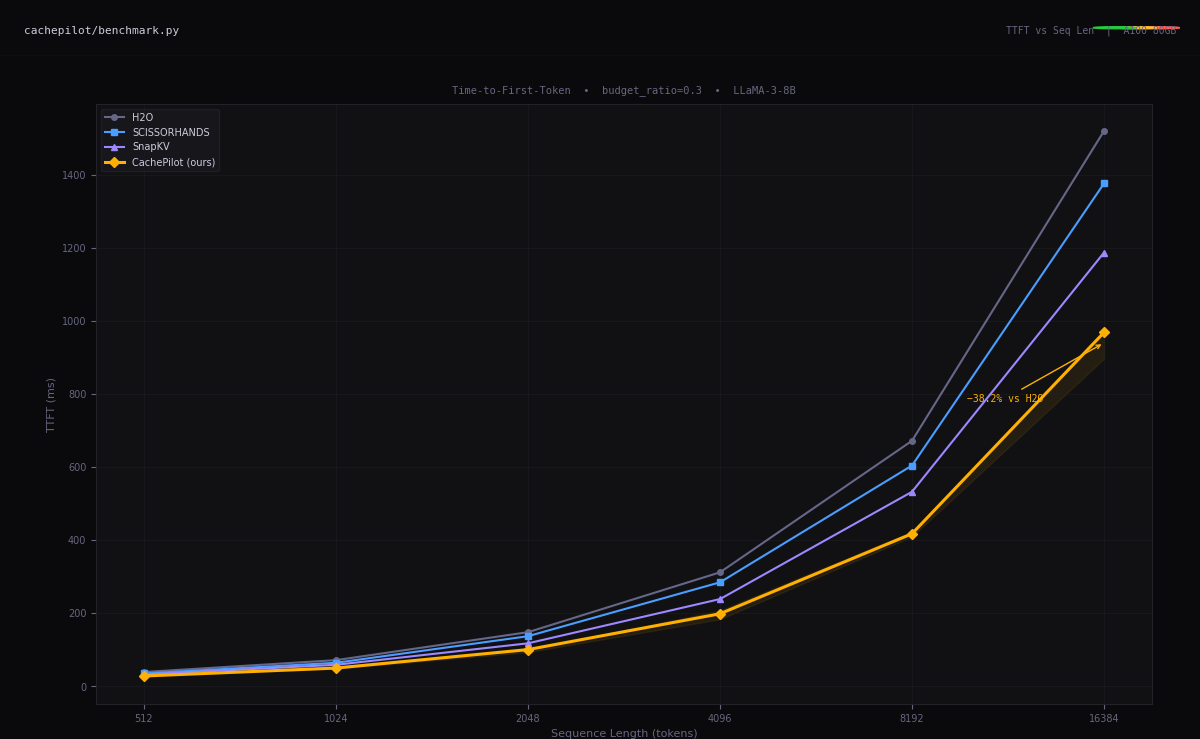

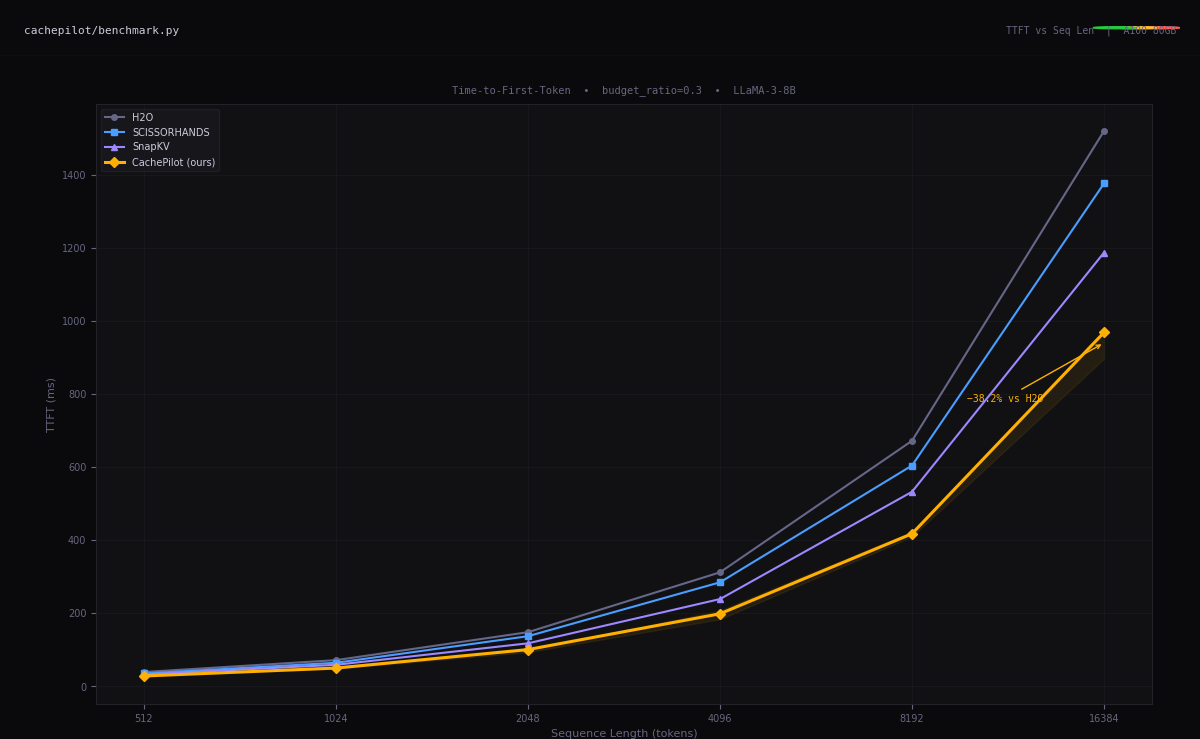

KV cache eviction policy engine for LLM inference. Benchmarked against H2O, Scissorhands, and SnapKV under real GPU memory pressure, with time to first token (TTFT), throughput, and perplexity degradation measured end-to-end.
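For readers unfamiliar with the baselines, here is a minimal sketch of an H2O-style "heavy hitter" eviction rule. It keeps a protected window of recent tokens plus the tokens with the highest accumulated attention, and it evicts the lowest-scoring older token whenever the cache exceeds its budget. The class name, the `budget` and `recent_window` parameters, and the toy attention weights are hypothetical. This illustrates one of the baselines named above and is not CachePilot's own policy.

```python
from dataclasses import dataclass, field


@dataclass
class HeavyHitterCache:
    """Toy H2O-style eviction: keep a recent window plus the tokens with the
    highest accumulated attention, evicting the lowest scorer when over budget."""

    budget: int         # max tokens kept in the KV cache (hypothetical parameter)
    recent_window: int  # newest tokens that are never evicted
    positions: list = field(default_factory=list)  # cached token positions, oldest first
    scores: dict = field(default_factory=dict)     # position -> accumulated attention

    def __post_init__(self):
        # At least one slot must remain evictable, or the budget can never be met.
        assert 0 <= self.recent_window < self.budget

    def step(self, pos: int, attn: dict) -> None:
        """Cache token `pos`, add this decode step's attention weights
        (position -> weight) to the running scores, then evict if over budget."""
        self.positions.append(pos)
        self.scores[pos] = 0.0
        for p, w in attn.items():
            if p in self.scores:  # ignore weights for already-evicted tokens
                self.scores[p] += w
        while len(self.positions) > self.budget:
            # Only tokens older than the protected recent window are candidates.
            cut = len(self.positions) - self.recent_window
            victim = min(self.positions[:cut], key=self.scores.__getitem__)
            self.positions.remove(victim)
            del self.scores[victim]


cache = HeavyHitterCache(budget=3, recent_window=1)
cache.step(0, {0: 1.0})
cache.step(1, {0: 0.9, 1: 0.1})
cache.step(2, {0: 0.5, 1: 0.1, 2: 0.4})
cache.step(3, {0: 0.6, 1: 0.1, 2: 0.1, 3: 0.2})
print(cache.positions)  # → [0, 2, 3]  (token 1 had the lowest accumulated score)
```

Token 0 survives because it keeps attracting attention. This is the "attention sink" behavior that heavy-hitter policies exploit, whereas a pure sliding window would have dropped it first.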

PROJECTS — 2023 / 2025

AI inference, distributed systems, 6G networking, robotics. Every project begins with a bottleneck and ends with a benchmark.


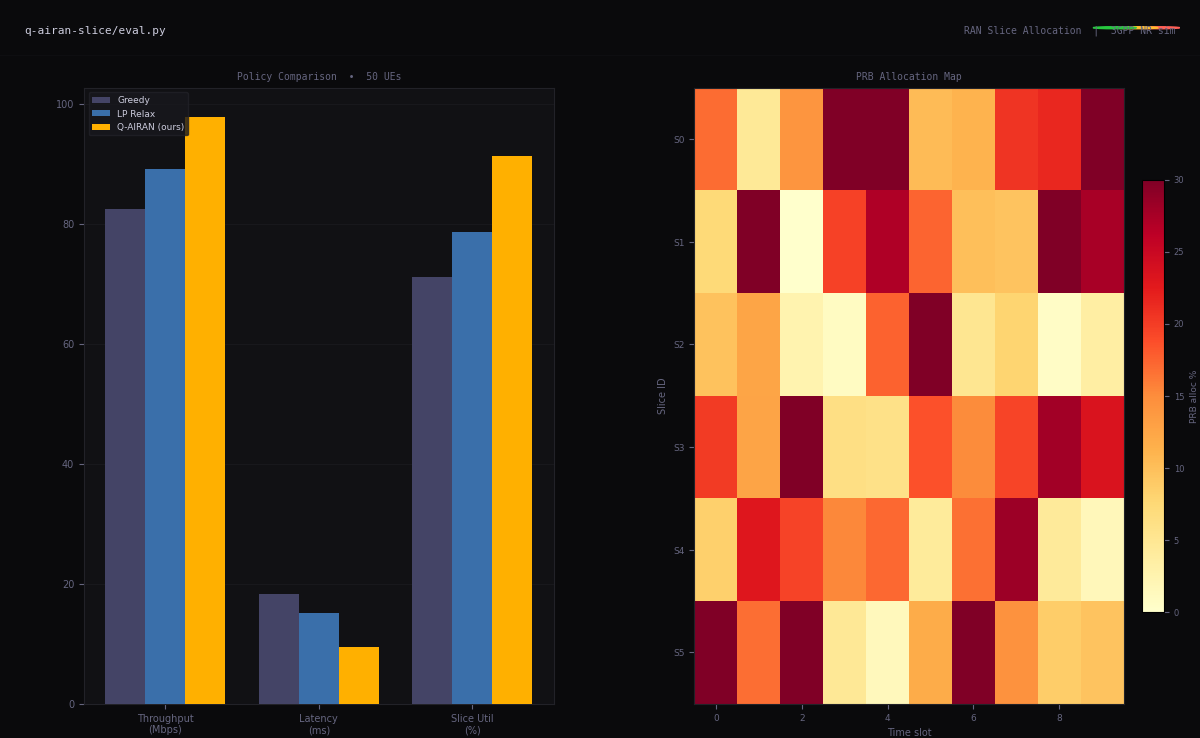

Quantum-inspired RAN slicing for 5G/6G. QUBO-formulated slice allocation across heterogeneous UE profiles. Benchmarked against greedy and LP baselines under varying traffic load, and outperforms both at scale.
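As a rough illustration of what "QUBO-formulated slice allocation" can mean, the sketch below builds a toy model. A binary `x[u, s]` is 1 when UE `u` is placed in slice `s`. A one-hot penalty forces each UE into exactly one slice, and a quadratic load term discourages piling demand onto a single slice. The utility and demand inputs, the penalty weights, and all function names are hypothetical. Exhaustive search stands in for a quantum-inspired solver (for example, simulated annealing), which is only practical for a handful of variables.

```python
import itertools


def build_qubo(utility, demand, onehot_weight, load_weight):
    """Build a QUBO {(i, j): coeff} over binaries x[u, s] flattened to i = u * S + s.

    Energy = -sum_{u,s} utility[u][s] * x[u,s]
             + A * sum_u (sum_s x[u,s] - 1)^2            (each UE in exactly one slice)
             + B * sum_s (sum_u demand[u] * x[u,s])^2     (discourage overloaded slices)
    with A = onehot_weight, B = load_weight; the constant A * U is dropped.
    """
    U, S = len(utility), len(utility[0])
    Q = {}

    def add(i, j, c):
        key = (min(i, j), max(i, j))
        Q[key] = Q.get(key, 0.0) + c

    for u in range(U):
        for s in range(S):
            i = u * S + s
            # Linear terms sit on the diagonal because x * x == x for binaries.
            add(i, i, -utility[u][s] - onehot_weight + load_weight * demand[u] ** 2)
        for s1, s2 in itertools.combinations(range(S), 2):
            add(u * S + s1, u * S + s2, 2 * onehot_weight)
    for s in range(S):
        for u1, u2 in itertools.combinations(range(U), 2):
            add(u1 * S + s, u2 * S + s, 2 * load_weight * demand[u1] * demand[u2])
    return Q


def energy(Q, x):
    return sum(c * x[i] * x[j] for (i, j), c in Q.items())


def solve_exhaustive(Q, n):
    """Exact minimum by enumerating all 2**n assignments (tiny n only)."""
    return min(itertools.product((0, 1), repeat=n), key=lambda x: energy(Q, x))


utility = [[3.0, 1.0], [1.0, 3.0]]  # UE 0 prefers slice 0, UE 1 prefers slice 1
Q = build_qubo(utility, demand=[1.0, 1.0], onehot_weight=10.0, load_weight=1.0)
print(solve_exhaustive(Q, n=4))  # → (1, 0, 0, 1): UE 0 -> slice 0, UE 1 -> slice 1
```

The one-hot weight must dominate the utilities. Otherwise the minimum-energy state can assign a UE to zero or two slices.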

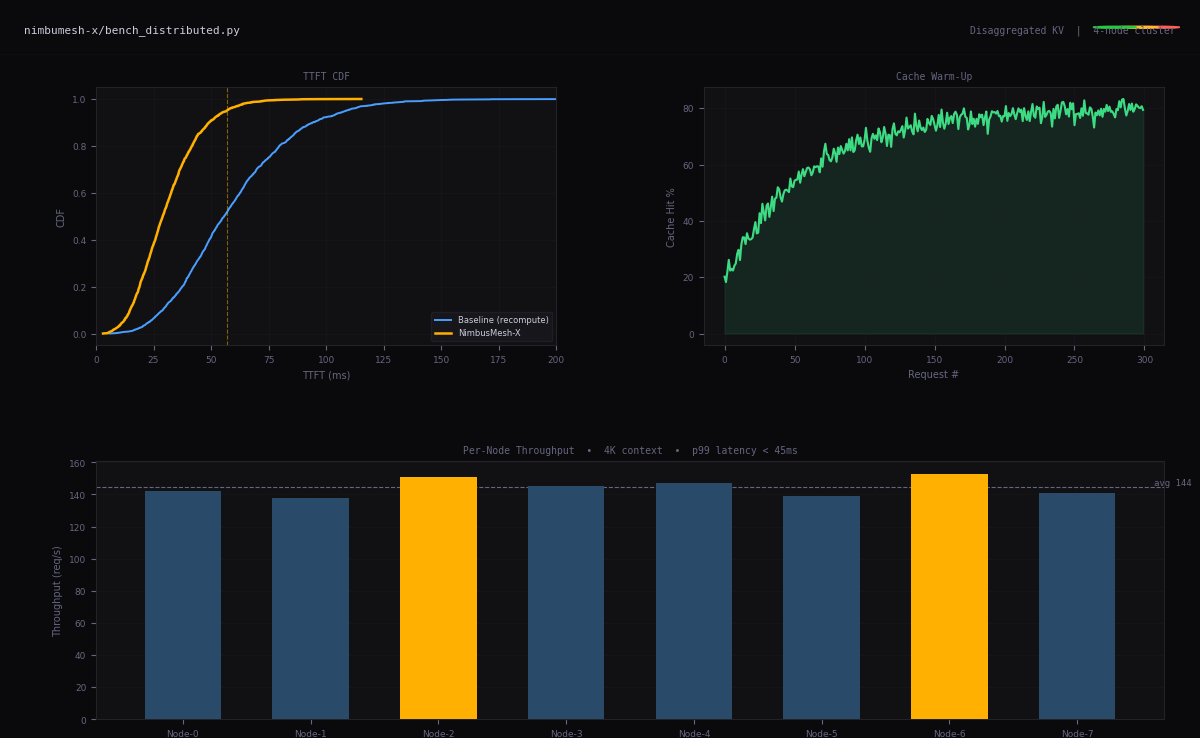

Distributed KV cache sharing layer for multi-node LLM serving. Eliminates redundant prefill recomputation across inference workers in disaggregated GPU clusters — reduces TTFT under memory contention.
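One common way to detect redundant prefill across workers is to hash the prompt in fixed-size token blocks, chaining each block's hash with its parent's. A shared index can then report the longest prefix whose KV blocks already exist somewhere in the cluster. The sketch below assumes this block-hashing approach; the block size, `PrefixRegistry`, and its methods are hypothetical. It omits the actual KV transfer between GPUs.

```python
import hashlib

BLOCK = 4  # tokens per KV block (hypothetical; kept tiny for the example)


def block_hashes(tokens):
    """Chain-hash each full block with its parent's hash, so equal hashes
    imply equal token prefixes up to and including that block."""
    hashes, parent = [], b""
    full = len(tokens) - len(tokens) % BLOCK  # a trailing partial block is not shared
    for start in range(0, full, BLOCK):
        chunk = ",".join(map(str, tokens[start:start + BLOCK])).encode()
        parent = hashlib.sha256(parent + chunk).digest()
        hashes.append(parent)
    return hashes


class PrefixRegistry:
    """Cluster-wide index: prefix-block hash -> worker that holds that KV block."""

    def __init__(self):
        self.owner = {}

    def publish(self, worker, tokens):
        """Record the blocks a worker has already prefilled (first writer wins)."""
        for h in block_hashes(tokens):
            self.owner.setdefault(h, worker)

    def lookup(self, tokens):
        """Return (tokens reusable from the cluster, worker per reused block)."""
        reused, sources = 0, []
        for h in block_hashes(tokens):
            if h not in self.owner:
                break  # prefix diverges here; everything after must be prefilled
            reused += BLOCK
            sources.append(self.owner[h])
        return reused, sources


registry = PrefixRegistry()
registry.publish("worker-0", list(range(10)))
prompt = list(range(8)) + [99, 100, 101]
reused, sources = registry.lookup(prompt)
print(reused, len(prompt) - reused, sources)  # → 8 3 ['worker-0', 'worker-0']
```

Chaining the hashes makes a block match only when every earlier token also matches. Without that property, fetched KV entries could be attached to a different context.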

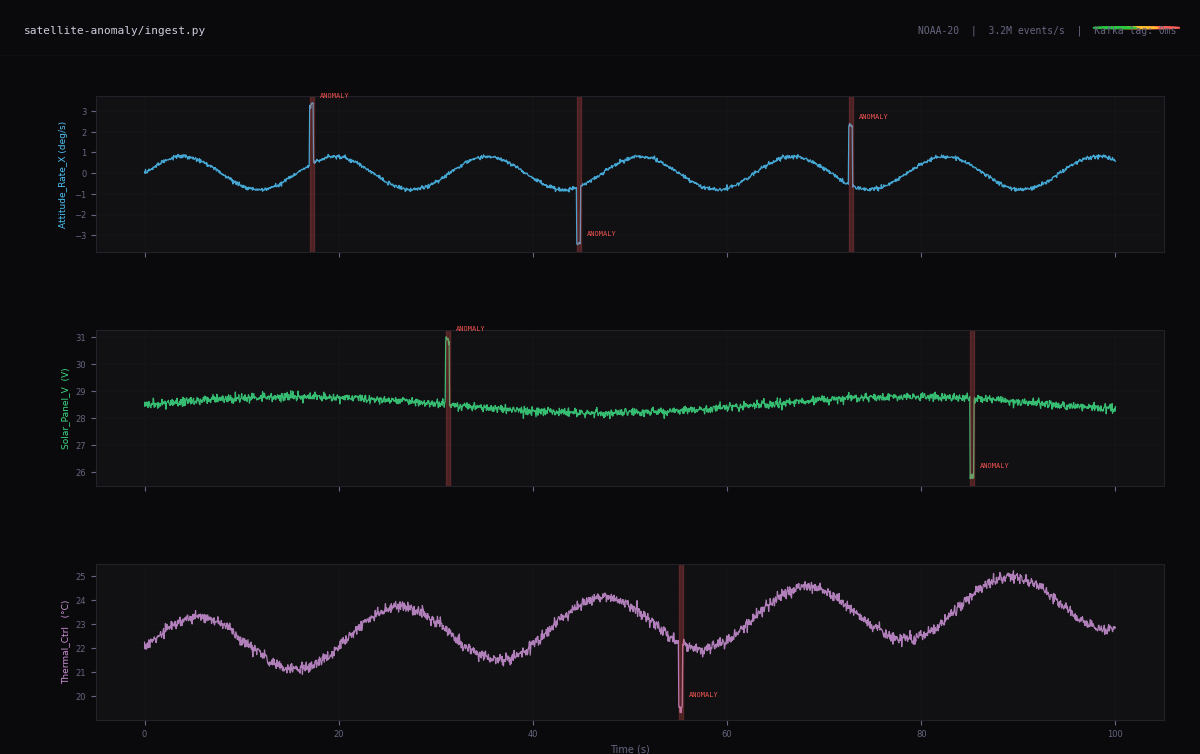

High-throughput telemetry ingestion and anomaly detection for satellite systems. Kafka-based streaming, real-time signal classification, reproducible benchmarks against historical mission data at scale.
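As a simple stand-in for the per-signal classification stage, the sketch below flags a telemetry sample whose z-score against a rolling window of recent samples exceeds a threshold. In a Kafka pipeline, this would run inside the consumer loop for each channel. The consumer is not shown. `RollingZScore`, its `window` and `threshold` defaults, and the sample stream are hypothetical. This is not the project's classifier.

```python
import math
from collections import deque


class RollingZScore:
    """Flag a sample whose z-score against the previous `window` samples
    exceeds `threshold` (hypothetical defaults)."""

    def __init__(self, window=50, threshold=4.0):
        self.buf = deque(maxlen=window)  # oldest samples drop out automatically
        self.threshold = threshold

    def update(self, x):
        anomalous = False
        if len(self.buf) >= 2:
            mean = sum(self.buf) / len(self.buf)
            var = sum((v - mean) ** 2 for v in self.buf) / (len(self.buf) - 1)
            std = math.sqrt(var)
            if std > 0 and abs(x - mean) / std > self.threshold:
                anomalous = True
        self.buf.append(x)  # anomalies are kept, so the baseline adapts to level shifts
        return anomalous


det = RollingZScore(window=5, threshold=3.0)
stream = [10, 11, 10, 11, 10, 50, 10]
print([det.update(x) for x in stream])
# → [False, False, False, False, False, True, False]
```

Keeping flagged samples in the window lets the detector adapt after a genuine level shift. The trade-off is that a burst of faults temporarily inflates the variance and masks the samples that follow.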

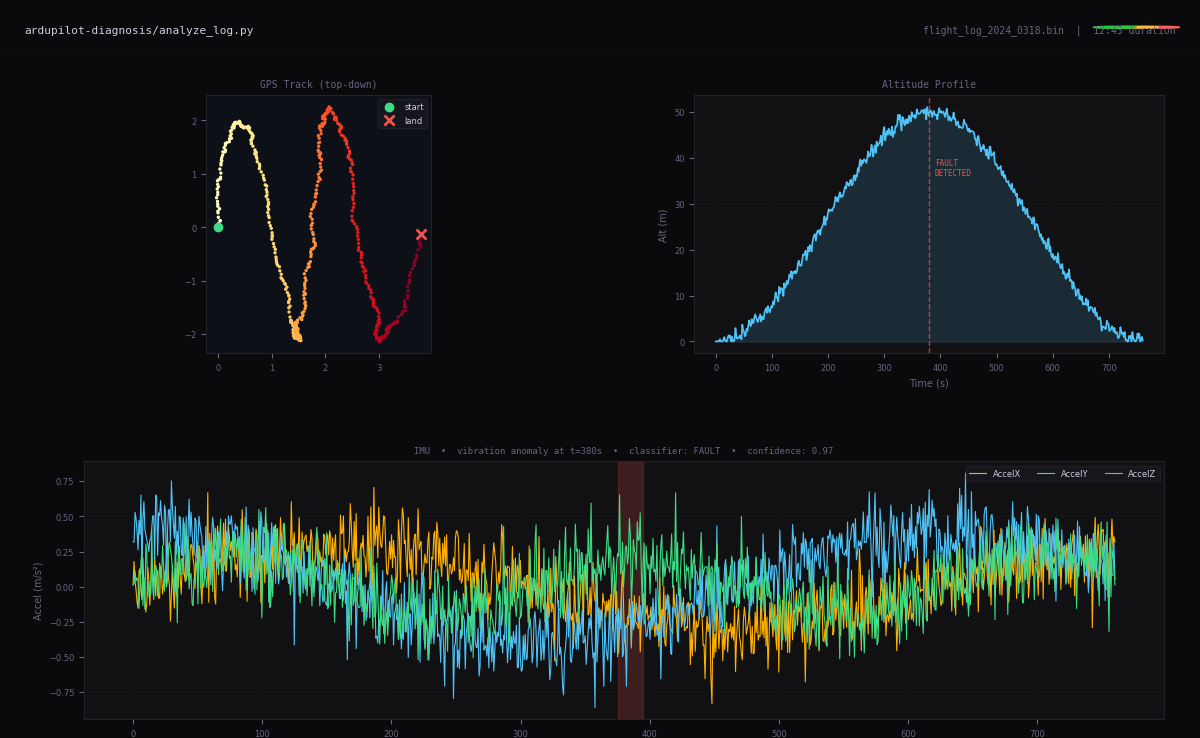

Automated flight log analysis and fault detection for autonomous drones. Real-time sensor fusion, ROS 2 telemetry pipeline, sub-50 ms anomaly classification running on embedded hardware.

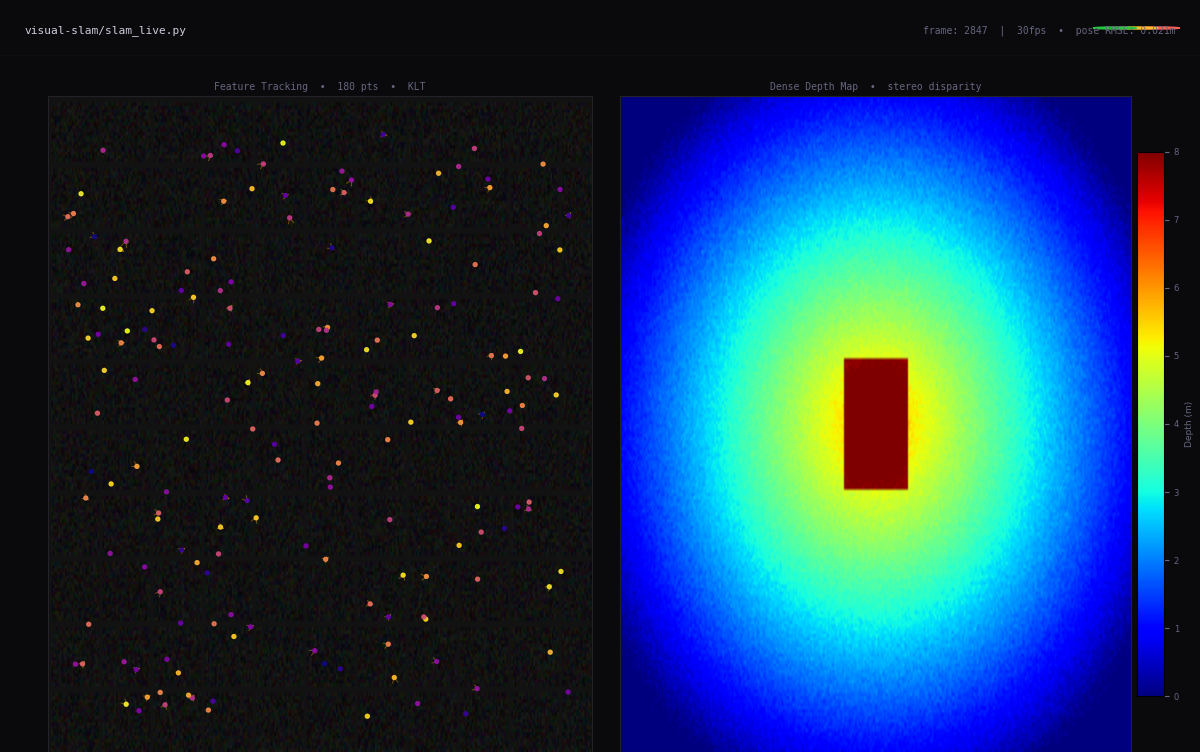

Real-time visual SLAM for autonomous systems. Feature tracking, pose estimation, and loop closure on live camera feeds at 30 fps on embedded hardware, tested in both indoor and outdoor environments.